Democracy Fails in the Age of Corporate Surveillance

We’re being watched. It’s time for us to start caring.

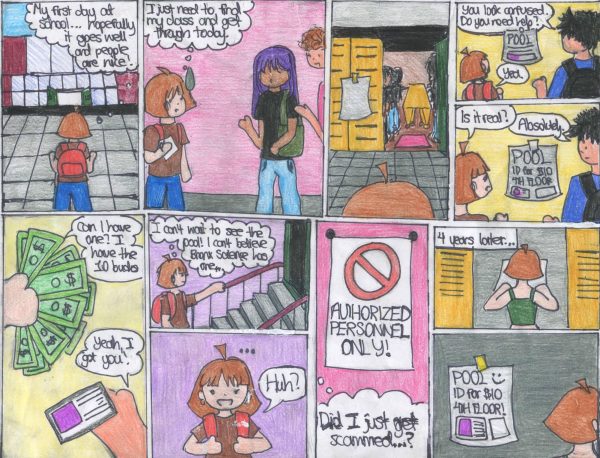

In spite of its privacy scandals, Facebook remains a popular social tool for students.

In 1997, at a meeting of the Federal Trade Commission, tech executives and government officials fiercely debated the ethics of a budding, rapidly growing industry. Civil libertarians urged that big data “posed an unprecedented threat to individual freedom”; tech executives retorted that they were capable of “regulating themselves.” Nearly thirty years later, we are faced with a tech industry that is an Orwellian shadow of its former self: still vying for self-regulation, still in denial of the threat it poses, now empowered by new money and ever-expanding influence.

Today, experts across the world, from renowned journalists to tech executives themselves, warn that the behavior of the big data industry is nothing short of a “crisis.” Recently, the New York Times Editorial Board launched The Privacy Project, an ongoing collection of op-eds that paints a chilling picture of a hidden industry. The authors advocate for government intervention and corporate accountability, but more than anything, they urge the public to care.

If you’re an internet user and a consumer of digital media, it’s unlikely that you’ve gone unscathed by the byproducts of a surveilled digital world. You’ve shaken your head at eerily personal advertisements, clicked past the growing number of pop-up privacy agreements, and wondered—more in confusion than in anger—at Mark Zuckerberg’s address to Congress following the Cambridge Analytica scandal. But, like most, you’ve probably also found yourself silently repeating a universal mantra: I have nothing to hide.

The most startling truth about the big data industry, though, is that it does not matter whether the data that companies are harvesting from you is incriminating. Tech companies will utilize it in an unethical and anti-democratic way regardless of its content, because regulations surrounding how technology companies may use your data are virtually nonexistent.

Shoshana Zuboff, an author and scholar of technology, uses the term “Surveillance Capitalism” to describe the internet market created by a lack of government oversight. In an interview with The Harvard Gazette, Zuboff elaborated on the expression: “Surveillance Capitalism [is] the unilateral claiming of private human experience as free raw material for translation into behavioral data.”

In this new branch of capitalism, personalized advertisements and advanced location-based services are neither the real cause for concern nor what is most lucrative to technology companies. But because these services provide value to the consumer, they give data mining the appearance of being a transaction, as if we are trading a bit of personal information for something in return. This idea, while comforting, is far from true. There’s a rule of thumb that those familiar with the tech industry preach: If you didn’t pay for it, you’re the product.

Mac Tanner ’20, an avid supporter of digital privacy, illustrates the breadth of the technology industry’s reach: “Many websites collect cookies, which can include every website you’ve visited, the things you search, your location, what device you use and more. […] With the increase of smart home devices like Alexa and Nest cameras, your personal data can include things like what you discuss in your home and what time you leave the house and come home. Your own cell phone can be used to track and listen to you, regardless of [whether] it’s turned off,” he explained.

This is far bigger than companies using our search history to predict our behaviors. This is now about them using data from every corner of our lives to influence our actions, eroding our individual sovereignty. Zuboff explains, “Surveillance capitalists now develop ‘economies of action,’ as they learn to tune, herd, and condition our behavior with subtle and subliminal cues, rewards, and punishments that shunt us toward their most profitable outcomes.” Often, these manipulations may steer users to something more sinister than profit.

In the run-up to the 2016 election, Cambridge Analytica, a political consulting firm hired by the Trump campaign, used raw psychological data harvested from as many as 87 million Facebook users to try to influence election outcomes. This was not a difficult or overly complicated operation; a researcher working with Cambridge Analytica simply created a third-party Facebook app which granted the firm access not just to the data of each user, but to that of each of their Facebook friends. In total, the leak had the power to influence a group of people larger than the entire population of Turkey.

No sector of society is untouched by surveillance capitalism, and certainly not the government. Last year, Amazon’s Ring, an internet-connected doorbell and home surveillance system, struck a deal with police allowing them to request homeowners’ footage. By contacting Ring owners, local police may gain access to footage of neighbors’ homes and property without obtaining a search warrant. Yet this arguable violation of our Fourth Amendment rights is only the tip of the colossal iceberg that is modern government surveillance.

What happens when an ostensibly democratic government gains access to its citizens’ phone calls, search history, and whereabouts through facial recognition? We do not have to reach into the future to imagine the severe possibilities. From 1956 to 1971, the FBI ran a covert surveillance program called COINTELPRO, whose purpose was to monitor, infiltrate, and discredit any political organizations it deemed “subversive,” sometimes through illegal means. Most notably, the FBI wiretapped the phone of civil rights activist Martin Luther King Jr. and sent him a “suicide package” of tapes recording his adultery, with a letter urging him to commit suicide. The FBI carried out operations like this with limited technological power, targeting activists from anti-war, environmental, and civil rights groups. In an era of extended surveillance reach, we cannot make the mistake of underestimating the corruption of our government.

Moreover, we cannot be naive enough to think that the government will voluntarily regulate an industry whose technology it exploits itself. “This increase of the technological arm of the state will give the government the ability to use it against anyone they choose. It is in the government’s best interest to increase the collection of our data,” Tanner said. As we’ve seen with the fossil fuel industry, the government will allow unethical businesses to flourish if it stands to benefit from their profits. In theory, the government should implement strong regulatory policies that ban the collection of metadata and create third-party organizations to monitor the technology industry; yet this will never happen without mass, organized pressure from American citizens.

Unfortunately, what makes the tyranny of the data industry so dangerous is that it has successfully convinced the public of its benevolence. The services that we have come to value so dearly are the ones that have already begun to strip us of our freedom. By engaging with digital services, we are quietly building our own prison. Still, we must understand that the benefits of technology and its exploitative nature are not inextricably linked. There is a world where technology betters humanity without exploiting our nature; it is up to us to determine if that’s the world we will live in.


Cameron Leo is an Editor-in-Chief of ‘The Science Survey’ and a Student Life Reporter for ‘The Observatory.’ Cameron has always loved to write,...