Our Future With Artificial Intelligence

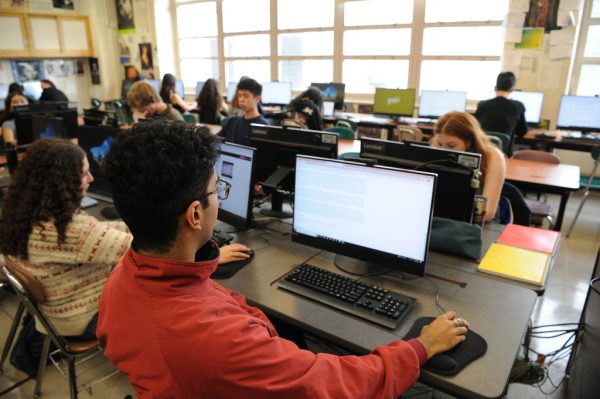

“There’s no doubt that AI is developing, technology-wise, at a very fast pace,” said Darius Barbano ’19.

We are living in the age of technology and, more specifically, artificial intelligence. From self-checkout machines to facial recognition systems, technology has advanced from the birth of AI as a field of research in the 1950s to the present day, when it is used in nearly every industry in the world.

Even though technology has become a major part of our lives, questions remain about the future of technology and artificial intelligence (AI). AI can be divided into two types: narrow and general. Narrow AI is designed to do one particular task, such as filtering spam e-mails or recommending playlists, and it is usually not viewed as problematic. General AI is what many people worry about, because it would match humans' ability to perform a wide range of cognitive tasks, but at far greater speed.

On one hand, AI has made many tasks more efficient. Google’s AI company, DeepMind, for example, has worked with the United Kingdom's National Health Service to train computers to identify patterns in medical scans that can help diagnose illnesses like cancer and heart disease. Computers have been programmed to help decide which startups to invest in, and some of those startups have grown into companies worth millions of dollars. AI has also reached agriculture, where farmers are working with scientists to monitor the health of crops and soil, maximizing output while protecting the land.

Artificial intelligence has staked its claim on modern society, but the unknown consequences of future developments continue to instill uncertainty and fear in people. Influential figures such as Bill Gates, Elon Musk, and Stephen Hawking have warned against expanding the use of AI without understanding its risks.

The greatest controversies concern how AI makes its own subjective decisions and the possibility of humans losing control of AI programs. One of the first areas of concern was targeted pop-up advertising, which has become increasingly prevalent since 1995.

“Targeted advertising is a big area where companies use what they know about you to sell you something,” said Alexander Warren ’19. “In my opinion, it’s immoral, and using AI violates users’ privacy.”

Since then, AI has developed further and has had more dangerous encounters with humans. Uber and Waymo (which began as Google's self-driving car project) have both had accidents involving their self-driving cars' automated decisions to merge, accelerate, and stop. An attentive human driver might have avoided some of these accidents. Governments say they use facial recognition to find missing people and criminals and to reduce identity fraud. However, many of these systems use algorithms that exhibit racial and gender biases unless they are specifically designed to correct for them. If a system misidentifies someone as the person behind a crime, the consequences can be disastrous for that innocent individual.

This can be a problem in the criminal justice system, for instance, when courts use AI to help determine an offender's bail amount, sentence, and parole date. These are subjective judgments that should be made by juries and judges capable of moral reasoning, not by technology that looks for patterns and works by simplifying the information it is given. Because an algorithm can only work with the information it has, there is also a chance that this information is biased, unfiltered, or simply wrong.

The overarching issue with AI is that the goals of AI programs may not be completely aligned with those of their human designers, and there may be no override that lets humans take control of a program when things go wrong.

“Modern AI algorithms are so complex that even the people who design them don’t understand how they make decisions,” said Darius Barbano ’19. “If we are to trust these algorithms with sensitive information relating to anything from personal to national security information, there’s no doubt that we should be cautious about safety.”

Christina Pan is a Copy Chief for ‘The Science Survey’ and an Athletics Section Reporter for ‘The Observatory.’ This is her second year writing...